Architecture is fate.

This quote hung heavy in my mind as we set out to design the first production version of Speedscale. I can’t find a direct attribution, but it was repeated to me by a serial unicorn entrepreneur who has led teams through the full lifecycle from product inception to enterprise standard. His core observation is that truly disruptive companies have an unfair architectural advantage that customers really care about and that is hard to copy. AWS vs. legacy IT for infrastructure cost. Cloud data warehouses vs. traditional OLAP systems for query speed. Git vs. SVN for large-team coordination.

What architecture do you choose for a new enterprise SaaS solution in 2020?

On-Premise

For the first decade of my career, things were really painful. And by really painful, I mean that we sold on-premise software. We moved “releases” of “software” through an “SDLC” so they could run within customer “data centers.” Remote debugging using stack traces, core dumps and log messages became a fixture of prehistoric life. This practice allowed our customers to control their data and heavily customize their use cases. Unfortunately, the release cadence was slow because enterprises ended up customizing TOO much. Users were generally dissatisfied. The customer’s IT department assumed all the burden of running the software and they rarely upgraded because it was too hard. All in all, life without electricity was a bit challenging but enough candles can really light up a room.

SaaS 1.0

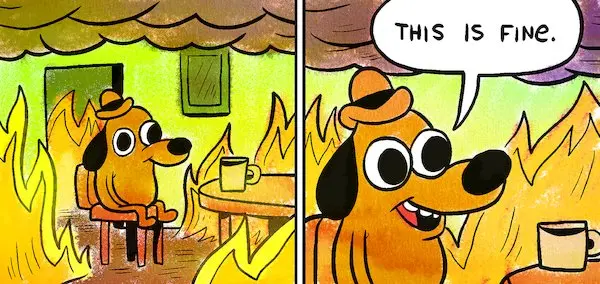

Then, a wondrous new company called Salesforce introduced us to SaaS 1.0. They, and the companies they spawned, took us to school on the advantages of automatic upgrades, multi-tenancy, horizontal scaling, low-cost shared infrastructure, continuous delivery and a new type of exothermic reaction called “fire.” Following the path trodden by consumer companies like Google and Facebook, we pushed as many deploys as we could as fast as possible using canaries, feature flags, blue/green deployments and rollbacks. Infrastructure efficiency was incredible because most resources were shared. Oddly, large enterprises still wanted their persnickety customizations, so we painfully figured out how to thread the needle between bespoke and off-the-shelf. We thought we finally had the technology to end our dependence on woolly mammoth blubber and defeat the remaining Neanderthals once and for all.

Lawyers… Lawyers… more Lawyers

Then, I was minding my own business building and maintaining a scaled SaaS service when I received a letter from one of our largest clients that started something like this:

A new law called the GDPR, and its spiritual successor the CCPA, loomed on the horizon and our large enterprise customers started embedding draconian legal clauses into our standard contracts. Friendly statements started appearing in MSAs like, “If you are ever breached, we will take good care of your children during your imprisonment.”

Under these laws we were classed as a “data subprocessor,” meaning we needed to be able to strongly separate customer data, track data lineage and quickly remove sensitive info even if it isn’t PII. This last point is very important because these laws dictate a particular timeframe (like 72 hours) that a vendor has to respond before penalties kick in. The clock starts ticking when the customer receives the notice, so the downstream data processor (this is you) has even less time to react.
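To make the shrinking clock concrete, here is a back-of-the-napkin sketch. The 72-hour window is the example figure from above, and the 30-hour forwarding delay is purely hypothetical; real deadlines depend on the law and the contract in question.

```python
from datetime import datetime, timedelta, timezone

# Assumed response window; 72 hours is only the example figure used in this post.
RESPONSE_WINDOW = timedelta(hours=72)


def vendor_time_remaining(customer_notified_at, vendor_notified_at,
                          window=RESPONSE_WINDOW):
    """Time left for the downstream processor once the notice reaches it.

    The deadline is measured from when the customer was notified,
    not from when the vendor finally hears about it.
    """
    deadline = customer_notified_at + window
    return max(deadline - vendor_notified_at, timedelta(0))


customer_notified = datetime(2020, 3, 2, 9, 0, tzinfo=timezone.utc)
# Hypothetical: the customer takes 30 hours to forward the notice to the vendor.
vendor_notified = customer_notified + timedelta(hours=30)

remaining = vendor_time_remaining(customer_notified, vendor_notified)
print(remaining)  # 1 day, 18:00:00
```

Every hour the notice spends in the customer’s inbox comes straight out of the vendor’s budget, which is why lookup and removal have to be fast by design.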

After much ridicule at my expense, my chief architect patiently explained that our architecture was designed for shared efficiency, not this crazy new hippie psychedelic privacy trip and I should get out of his office. Many of our core assumptions were now wrong and our cost model was suddenly flipped on its head.

SaaS 2.0 aka Kubernetes Native

To be a good partner to our enterprise customers, Speedscale needs to pleasantly cope with:

- Extremely high data volume

- Per-customer data isolation

- Horizontal scaling of machine learning workloads (aka Scenario creation)

- Retroactive data scrubbing

- Automatic upgrades of both data pipeline infrastructure and algorithms

- Customer economies of scale when negotiating with Cloud vendors
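Two items on that list, per-customer isolation and retroactive scrubbing, are easier to reason about with a toy model. The sketch below is not Speedscale’s implementation; `TenantStore`, its methods and the sample records are all hypothetical names invented for illustration. The point is only that when each customer’s data lives in its own partition, a scrub touches one customer and nobody else.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Record = Dict[str, str]


class TenantStore:
    """Toy in-memory store with one partition per customer (hypothetical)."""

    def __init__(self) -> None:
        self._partitions: Dict[str, List[Record]] = defaultdict(list)

    def write(self, tenant: str, record: Record) -> None:
        self._partitions[tenant].append(record)

    def read(self, tenant: str) -> List[Record]:
        # Return a copy so callers cannot mutate the partition directly.
        return list(self._partitions.get(tenant, []))

    def scrub(self, tenant: str, is_sensitive: Callable[[Record], bool]) -> int:
        """Retroactively drop matching records for one tenant; return the count removed."""
        before = self._partitions.get(tenant, [])
        kept = [r for r in before if not is_sensitive(r)]
        self._partitions[tenant] = kept
        return len(before) - len(kept)


store = TenantStore()
store.write("acme", {"path": "/login", "body": "card=4111"})
store.write("acme", {"path": "/health", "body": "ok"})
store.write("globex", {"path": "/login", "body": "card=4242"})

# Scrub only acme's partition; globex's data is untouched.
removed = store.scrub("acme", lambda r: "card=" in r["body"])
print(removed, len(store.read("acme")), len(store.read("globex")))  # 1 1 1
```

In a shared-everything design the same scrub means hunting through commingled tables, which is exactly the cost model that got flipped on its head.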

Many months ago, my cofounders and I called some of our smart friends (including Leonid Igolnik of SignalFx/Taleo lineage) and we worked up from first principles to develop our point of view for Speedscale. I’ve got a lot of code to write today, so let’s talk through it briefly using someone else’s existing architectural diagrams.

I believe Databricks has many of the correct elements in their split-plane architecture:

To distill out the important bits, the solution is designed to have a control plane that we, the vendor, maintain, while the data plane can reside in the customer’s environment. This architectural decision is advantageous over a pure SaaS solution because it lets the customer maintain control over their data, implement their own data scrubbing policies and negotiate their own deal with their cloud provider to take advantage of scale. Approved.
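For the code-minded, the split can be sketched in a few lines. This is a simplified illustration under my own assumptions, not the Databricks or Speedscale implementation: `ControlPlane`, `DataPlane`, `heartbeat` and `sync` are hypothetical names. The vendor side only ever sees version metadata, while payloads stay in the customer’s environment.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ControlPlane:
    """Vendor-hosted side: tracks versions, never receives customer payloads."""
    desired_version: str = "1.0.0"
    reported: Dict[str, str] = field(default_factory=dict)

    def heartbeat(self, cluster_id: str, running_version: str) -> str:
        # Record what the cluster reports and answer with the version it should run.
        self.reported[cluster_id] = running_version
        return self.desired_version


@dataclass
class DataPlane:
    """Customer-hosted side: keeps data locally and pulls upgrades from the vendor."""
    cluster_id: str
    version: str
    records: List[str] = field(default_factory=list)

    def ingest(self, payload: str) -> None:
        # Payloads are stored locally and are never sent to the control plane.
        self.records.append(payload)

    def sync(self, control: ControlPlane) -> bool:
        """Send a heartbeat; adopt the desired version if it differs. True if upgraded."""
        desired = control.heartbeat(self.cluster_id, self.version)
        if desired != self.version:
            self.version = desired
            return True
        return False


control = ControlPlane(desired_version="1.1.0")
plane = DataPlane(cluster_id="customer-a", version="1.0.0")
plane.ingest("captured request body")

upgraded = plane.sync(control)
print(upgraded, plane.version, control.reported)
# True 1.1.0 {'customer-a': '1.0.0'}
```

Note that the data plane initiates the call. A pull model like this means the customer does not have to open inbound access to their environment, and the control plane learns about the new version on the next heartbeat.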

There’s still one dimension we need to address to delight Speedscale customers: automatic upgrades and vendor maintenance of the control plane runtime environment. Kubernetes has all the right bones to make that easy, but it isn’t specifically tailored for that use case. Conveniently, a company exists that has reached many of the same conclusions and they describe their solution pretty well:

Many large enterprises are limiting their data loss exposure by running their app within a VPC and eschewing the use of cloud services in favor of self-hosted OSS. For some highly sensitive workloads that’s necessary, but we believe there is a better path for others. We’ve architected Speedscale with a split-plane architecture that runs in a customer-hosted Kubernetes cluster with automatic updates. We believe this provides the right balance of data protection and easy maintenance for our enterprise administrators.
